THE WILD ROBOT

VFX IN ASIA • THE PENGUIN • UNSUNG HEROES OF SFX • PROFILES: RACHAEL PENFOLD & TAKASHI YAMAZAKI

Welcome to the Fall 2024 issue of VFX Voice!

Thank you for being a part of the global VFX Voice community. We’re proud to be the definitive authority on all things VFX.

In this issue, our VFX Voice cover story celebrates The Wild Robot, the animated adventure film following Roz the robot’s journey of survival. We shine a light on the booming Asian VFX industry in a special focus feature. And we explore a multitude of VFX trends: the evolving role of SFX artists; the process of hiring VFX vendors; the generational impact of film history on today’s VFX practitioners; the dynamic use of CGI to enliven prehistoric creatures; and a vital sampling of amazing artwork that VFX artists have created in their spare time.

We share personal profiles of Godzilla Minus One filmmaker Takashi Yamazaki and One of Us Co-Founder Rachael Penfold. VFX Voice goes behind the scenes of HBO’s limited series The Penguin, delves into the latest in tech & tools around facial animation and real-time rendering for animation, and measures the impact of the latest mixed-reality VR headsets. We also put the VES Los Angeles Section in the spotlight, highlight VFX studios helping to foster work-life balance and more.

Dive in and meet the innovators and risk-takers pushing the boundaries of what’s possible and advancing the field of visual effects.

Cheers!

Kim Davidson, Chair, VES Board of Directors

Nancy Ward, VES Executive Director

P.S. You can continue to catch exclusive stories between issues only available at VFXVoice.com. You can also get VFX Voice and VES updates by following us on X (Twitter) at @VFXSociety.

FEATURES

VFX TRENDS: DOCUMENTING PREHISTORY

Photorealistic CGI captures iconic beasts in the wild.

TECH & TOOLS: FACIAL ANIMATION

Advancements break new ground with greater realism.

PROFILE: RACHAEL PENFOLD

One of Us Co-Founder calls humanity a key to success.

COVER: THE WILD ROBOT

Reviving an analog feel delivers a warmth and presence.

VFX TRENDS: UNSUNG HEROES

The changing role of SFX artists in defining visual reality.

PROFILE: TAKASHI YAMAZAKI

Godzilla Minus One director aims to keep audiences riveted.

TELEVISION/STREAMING: THE PENGUIN

Ruling the night and the devastated streets of Gotham City.

VFX TRENDS: HIRING VENDORS

Maturing selection process becomes more sophisticated.

V-ART: PERSONAL PERSPECTIVES

Industry talent lead rich creative lives outside of work.

SPECIAL FOCUS: VFX IN ASIA

The Asian VFX industry is experiencing a meteoric rise.

VFX TRENDS: GENERATIONAL CHANGE

VFX artists weigh how film history influenced their careers.

VR/AR/MR TRENDS: EMERGENT IMMERSIVE

Meta Quest 3 and Apple Vision Pro headsets power growth.

TECH & TOOLS: REAL-TIME RENDERING

Animators explore new techniques to spark innovation.

HEALTH & WELL-BEING: GETAWAYS

VFX studios help artists achieve a work-life balance.

DEPARTMENTS

EXECUTIVE NOTE

THE VES HANDBOOK

VES SECTION SPOTLIGHT – LOS ANGELES

VES NEWS

FINAL FRAME – THE POWER OF SFX

ON THE COVER: Shipwrecked on a deserted island, a robot named Roz must learn to adapt to its new surroundings in The Wild Robot (Image courtesy of DreamWorks Animation and Universal Pictures)

VFXVOICE

Visit us online at vfxvoice.com

PUBLISHER

Jim McCullaugh publisher@vfxvoice.com

EDITOR

Ed Ochs editor@vfxvoice.com

CREATIVE

Alpanian Design Group alan@alpanian.com

ADVERTISING

Arlene Hansen Arlene-VFX@outlook.com

SUPERVISOR

Ross Auerbach

CONTRIBUTING WRITERS

Naomi Goldman

Trevor Hogg

Chris McGowan

Barbara Robertson

Oliver Webb

ADVISORY COMMITTEE

David Bloom

Andrew Bly

Rob Bredow

Mike Chambers, VES

Lisa Cooke

Neil Corbould, VES

Irena Cronin

Kim Davidson

Paul Debevec, VES

Debbie Denise

Karen Dufilho

Paul Franklin

Barbara Ford Grant

David Johnson, VES

Jim Morris, VES

Dennis Muren, ASC, VES

Sam Nicholson, ASC

Lori H. Schwartz

Eric Roth

Tom Atkin, Founder

Allen Battino, VES Logo Design

VISUAL EFFECTS SOCIETY

Nancy Ward, Executive Director

VES BOARD OF DIRECTORS

OFFICERS

Kim Davidson, Chair

Susan O’Neal, 1st Vice Chair

Janet Muswell Hamilton, VES, 2nd Vice Chair

Rita Cahill, Secretary

Jeffrey A. Okun, VES, Treasurer

DIRECTORS

Neishaw Ali, Alan Boucek, Kathryn Brillhart

Colin Campbell, Nicolas Casanova

Mike Chambers, VES, Emma Clifton Perry

Rose Duignan, Gavin Graham

Dennis Hoffman, Brooke Lyndon-Stanford

Quentin Martin, Maggie Oh, Robin Prybil

Jim Rygiel, Suhit Saha, Lopsie Schwartz

Lisa Sepp-Wilson, David Tanaka, VES

David Valentin, Bill Villarreal

Rebecca West, Sam Winkler

Philipp Wolf, Susan Zwerman, VES

ALTERNATES

Andrew Bly, Fred Chapman

John Decker, Tim McLaughlin

Ariele Podreider Lenzi, Daniel Rosen

Visual Effects Society

5805 Sepulveda Blvd., Suite 620

Sherman Oaks, CA 91411

Phone: (818) 981-7861 vesglobal.org

VES STAFF

Elvia Gonzalez, Associate Director

Jim Sullivan, Director of Operations

Ben Schneider, Director of Membership Services

Charles Mesa, Media & Content Manager

Eric Bass, MarCom Manager

Ross Auerbach, Program Manager

Colleen Kelly, Office Manager

Mark Mulkerron, Administrative Assistant

Shannon Cassidy, Global Manager

P.J. Schumacher, Controller

Naomi Goldman, Public Relations

BLENDING CG CREATURES & WILDLIFE PHOTOGRAPHY TO BRING LOST GIANTS TO LIFE

By TREVOR HOGG

A quarter century ago, the landmark production Walking with Dinosaurs was released by BBC Studios Science Unit, Discovery Channel and Framestore. The six-part nature docuseries pushed the boundaries of computer animation to envision the iconic prehistoric beasts in a more realistic fashion than the expropriated Hollywood DNA of Jurassic Park and sentimental cartoon cuteness of The Land Before Time. Building upon that nature documentary miniseries are Netflix and Apple, which partnered with BBC Studios Natural History Unit, Silverback Films, paleontologists Dr. Tom Fletcher and Dr. Darren Naish, narrators Sir David Attenborough and Morgan Freeman, cinematographers Jamie McPherson, Paul Stewart and David Baillie, and digital creature experts ILM and MPC to fuse photorealistic CGI with wildlife photography in Life on Our Planet and Prehistoric Planet.

OPPOSITE TOP TO BOTTOM: The giraffe-sized flying predator Hatzegopteryx courting on its own love island in the “Islands” episode of Prehistoric Planet 2. (Image courtesy of Apple Inc.)

The T-Rex was clever, as revealed by a large brain, so its young were doubtless curious and even playful. Cinematographer Paul Stewart imagined this scene in the “Coasts” episode of Prehistoric Planet 1. (Image courtesy of Apple Inc.)

GSS and RED Monstro camera on location in Morocco to film Terrorbirds hunting for Life on Our Planet. (Image courtesy of Jamie McPherson)

Shooting CG rather than live-action creatures was educational for Jamie McPherson, Visual Effects Director of Photography for Life on Our Planet, who previously took a GSS (Gyro-Stabilizer System) normally associated with helicopters, attached it to a truck and captured wild dogs running 40 miles per hour in Zambia for the BBC One documentary series The Hunt. “I never did visual effects before this, so it was a big learning curve for me, and ILM never tried to do visual effects in a style that we came up with, which was to make it feel like a high-end, blue-chip documentary series. It was also sitting alongside Natural History. If you’re doing pure visual effects, you’ve got more leeway of people not seeing how those two worlds mix. In terms of the creatures, the process was incredibly long. We worked out what the creature was going to be between the producer, director, myself, ILM and Amblin. Then you have to work out how it interacted with another creature of the same or different species. You have all of these parameters that you’re trying to blend together and make them feel believable. When we were out filming the back plates for this, we made sure that they felt reactive, so we were careful to work out where the creature was going. As for behavior, we had to dial it back from what I filmed in the real world because we didn’t want to break that believability and take people out of the moment of them noticing a crazy camera move, like a T-Rex walking over you.”

TOP: A herd of Mammoths walk across the wintry landscape. (Image courtesy of Netflix)

Being able to rely upon predetermined CG creatures provided the opportunity to utilize the best of narrative and Natural History cinematography. “To me, it’s a drama, and you don’t give the audience anything more by pretending that you’re in a hide 160 million years ago with a long lens,” observes David Baillie, Director of Photography for Prehistoric Planet and Life on Our Planet. “It’s more important to tell the story.” Coloring his perspective is that Baillie continually shifts between narrative projects like Munich: The Edge of War and Natural History productions such as Frozen Planet. “My job as a cinematographer is to tell the story with all of the emotion, and I do that by using focal length, camera movement and framing.” Some limitations need to be respected. “We’re in a location that we maybe haven’t recce before. There is a bit of rock and river and you say, ‘Let’s do this.’ Everybody says, ‘That looks nice.’ Then, the Visual Effects Supervisor will say, ‘Using that shot will cost another £100,000 because we’ve planned it the other way.’ That can be quite frustrating.” A slightly different mental attitude had to be adopted. “I have to be more disciplined with things like changing focal length and stop. If I’m doing a documentary or even a drama, I might think, ‘I’ll tighten it up a bit here.’ But they’ve already done one pass on the motion control,” Baillie notes. Everything had to be choreographed narratively and visually before principal photography commenced. “To ensure our plates matched the action we wanted, we had reference previsualization video prepared by the animators for every backplate we planned to shoot,” remarks Paul Stewart, writer, Producer and Director of Photography for Prehistoric Planet, who won Primetime Emmy Awards for The Blue Planet, Planet Earth and Planet Earth II. “Using accurate scale ‘cut-out’ models, or [for the big ones] poles on sticks, we could see how big the dinosaur was in the actual scene. Sometimes shockingly huge! Knowing size helped us figure out the speed and scope of any camera moves; we would capture a ‘good plate’ then plates where we mocked up environmental interactions like kicking dirt when running, brushing bushes and picking up twigs. We also tried where possible to isolate foreground elements using bluescreen. In some cases, we even used beautifully made blue puppets and skilled puppeteers to create complex interactions; for example, a baby Pterosaur emerging from a seaweed nest. This all went back to the MPC wizards, together with LiDAR scans and photogrammetry, to make the magic happen.”

There was a lot of unforeseen rethinking later about how things worked out. “For instance, when the Natural History Unit is on location, it’s one guy with the camera shooting for maybe months,” explains Kirstin Hall, Visual Effects Supervisor at MPC. “There’s no DIT [Digital Imaging Technician] or a script, and he’s not even taking notes on his cards. We didn’t think about that as a reality. When we showed up with our huge crew, and all of these shots that we had to get and things we had to do, it was a huge shock for everybody involved. It caused us to think, ‘We need to plan this a different way. How are we going to get the data?’ Also, they need to shoot a script, whereas most of the narratives in Natural History come in the edit. Because we wanted to keep it as holistic as possible, the biggest thing for us was working together and becoming one healthy team. The NHU started doing their own charts, and we did HDRIs on the side. The shoots became like clockwork for Prehistoric Planet 2.”

TOP TO BOTTOM: According to Cinematographer Paul Stewart, “Prehistoric animals are a mix of the familiar and strange.”

This large predatory Pterosaur evolved into a lifestyle similar to some modern storks. (Image courtesy of Apple Inc.)

An example of a full CG shot from the “Coasts” episode of Prehistoric Planet 1. (Images courtesy of Apple Inc.)

Aerial photography for the “Oceans” episode of Prehistoric Planet 2, which was captured in Northern Sweden. (Image courtesy of Apple Inc.)

Even with the experience of working on The Lion King and The Jungle Book, MPC still had room for improvement when it came to depicting CG creatures in a naturalistic and believable manner. “We had to get up to speed on animal behaviors and instinct and tailor our whole kit to that, like the lenses and cameras we used and filming off-speed; everything is slightly slow-motion, about 30 fps,” Hall states. “In Prehistoric Planet 2, we went 100 fps or more, which is hard to do with feathers and fur, but it helped us to get the full experience of these blue-chip Natural History Unit productions.” It was not until Jurassic World Dominion that feathered dinosaurs appeared in a Hollywood franchise, but this was not the case for Prehistoric Planet. “We knew from the beginning we would have to [do feathers] for it to be scientifically accurate,” Hall explains. “We were lucky enough to work with Darren Naish and didn’t realize how integrated he would be in our team. It felt like Darren was a member of MPC because he was in every asset and animation review. If something was not authentic in how something moves or blinks, we would catch it early on and rectify going forward. We learned a lot, even with the plants. When shooting on location, we made sure to rip out holly, and we couldn’t film on grass because it did not exist [during the Late Cretaceous period].”

Some unexpected artistic license was taken given the nature of the subject matter of Life on Our Planet. “We tried to be as authentic as possible, certainly in the cinematography,” remarks Jonathan Privett, Visual Effects Supervisor at ILM. “Where it varied because we could control what the creatures did, there are quite a few places where we used continuity cuts that you wouldn’t be able to do if you were shooting Natural History because it would have required multiple cameras which they rarely use. We didn’t start off like that. It was a bit of a journey. From the outset, we said we wouldn’t do that. However, there’s something about the fact that creatures are not real even though they look real, which led to a sense of being slightly odd that you wouldn’t do those continuity cuts when you watched the edits back; so we ended up putting them in.”

TOP TO BOTTOM: ILM put a lot of detail into the CG dinosaurs, as showcased by this image of a T-Rex. (Image courtesy of Netflix)

Cinematographer David Baillie used a helicopter to capture aerial photography for Prehistoric Planet 2. (Image courtesy of David Baillie)

Essentially, the virtual camera kit emulated the physical one. “We shot a lot of it on the Canon CN20, which is a 50mm to 1000mm lens,” Privett states. “Jamie has a doubler [lens extender] that can make it 1500mm. An incredible bit of kit. We also used a Gyro-Stabilizer System. The process is the same as if we were making a feature. We took Jamie’s GSS and shot lens grids for it. It’s optically quite good because it’s quite long, so everything is quite flat, but we mirrored the distortion, and inside the GSS is a RED camera, so that is a relatively standard thing. The other $300,000 worth of equipment is the stabilization bit, and our traditional methods of camera tracking work fine for that. The hard bit is we never use lenses that long. In a drama, nobody is breaking out the 600mm lens. That’s quite interesting to have to deal with because you probably don’t have much information. It could be a blurry mass back there, so our brilliant layout department managed to deal with those well.”

“What made my hair go gray is the language of wildlife photography and doing long, lingering close-ups of creatures,” Privett laughs. “You’re right in there, so there’s nowhere to hide in terms of your modeling and texturing work. We had to spend a lot of time on the shapes. For instance, a lizard has a nictitating membrane, so when closing its eye all the muscles around it move, and actually the whole shape of the face almost changes. We had to build all of those into the models probably in more detail than we would normally expect.” The image is more compressed as well. “Generally, the crane is panning with the GSS, and Jamie will counter-track around the creature so you get this great sense of depth. You can also see the air between you and the subject because you’re so far away from it. Any kind of temperature gradient shows up as heat haze in the image. In some of the shots, we’re warping the final rendered image to match it with what’s happening in the background because you can get some crazy artifacts,” Privett remarks.

There was lots of creativity but less freedom. “The fusion of science with the creatives at MPC paid off in a spectacular way,” Stewart notes. “Creativity could never come at the expense of accuracy, and surprises and beauty had to be hard-baked into the sequences rather than serendipitously revealed in the course of filming. Giving ourselves hard rules about what could and could not happen in the animal world helped set limits and improve the believability of the films. We might have wanted the animal to jump or run, but the bones tell us it could not, so it didn’t. I even found myself checking the craters on the moon for any we should erase because they happened in the last 65 million years! There was also the matter of cost. We could never afford to make all the creatures we wanted or to get the creatures to do everything we would have liked. Interaction with water, vegetation, even shadows and the ground, required huge amounts of art and render time to get right but would be hardly noticed by the audience. We got savvy quickly at how to get impact without costing the sequence out of existence. But the thrill of recreating a world that disappeared so long ago never dulled. Even the scientists and reviewers said they soon forgot they were watching animation,” Stewart says.

TOP TO BOTTOM: A Morturneria breaks the water surface courtesy of MPC for Prehistoric Planet 2. (Image courtesy of Apple Inc.)

Cinematographer Paul Stewart filming on a beach in Wales for a Prehistoric Planet sequence involving a Pterosaur beach. (Image courtesy of Paul Stewart)

A hovercraft brings in a proxy 3D-printed dinosaur head to allow Cinematographer David Baillie to properly frame a shot for Prehistoric Planet 2. (Image courtesy of David Baillie)

Standard visual techniques had to be rethought. “The easiest way to explain it is, if I’m filming a tiger in the jungle, I would want to be looking at it so you get a glimpse into its world,” McPherson explains. “I tend to shoot quite a long lens and make all of the foliage in the foreground melt so you’re looking through this kaleidoscopic world of this tiger walking through a forest. But you can’t do that with a visual effects creature because they can’t put the creature behind melty, out-of-focus foliage. The best example is the opening shot of Episode 101 of Life on Our Planet of a Smilodon walking through what looks to be grass. There is a lot of grass in front and behind it. The only way to achieve that was to shoot where the creature was going to be on this plate. You shoot it once clean. Then we add in and shoot multiple layers of out-of-focus grass and then those shots are all composited together so it looks like the creature is walking behind the grass, and we match the frame speed, which then makes it feel like you’re looking into that world.”
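The layering McPherson describes can be pictured as a back-to-front "over" composite. The sketch below is only an illustration of that general technique, not the production pipeline: the layer names, the premultiplied RGBA representation and the single-pixel values are all assumptions made for the example.

```python
from functools import reduce

# A pixel is premultiplied RGBA: (r, g, b, a), each value in [0, 1].

def over(front, back):
    """Porter-Duff 'over' operator for premultiplied RGBA pixels."""
    fr, fg, fb, fa = front
    br, bg, bb, ba = back
    k = 1.0 - fa  # how much of the back layer still shows through
    return (fr + br * k, fg + bg * k, fb + bb * k, fa + ba * k)

def composite(layers_back_to_front):
    """Stack layers, listed from farthest to nearest, into one pixel."""
    return reduce(lambda acc, layer: over(layer, acc), layers_back_to_front)

# Hypothetical single-pixel layers, farthest first (values are invented).
clean_plate = (0.20, 0.30, 0.10, 1.00)    # opaque background plate
cg_creature = (0.40, 0.30, 0.20, 1.00)    # opaque creature pixel
blurred_grass = (0.05, 0.15, 0.02, 0.25)  # soft foreground grass, 25% coverage

result = composite([clean_plate, cg_creature, blurred_grass])
print(result)
```

Because the out-of-focus grass covers only part of the pixel, the creature remains visible through it, which is the "looking through foliage" effect the separate grass passes are shot to recreate.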

Having limitations is not a bad thing. “There are restrictions, but they also make you more creative,” McPherson observes. “You have to overcome the limitations of a limited number of shots by making sure that every shot works together and tells the story in the best possible way.” The usual friend or foe had to be dealt with throughout the production. “Because of weather, some shoots were hard, which had nothing to do with visual effects,” Baillie states. “We had to do some stormy cliff shots in Yesnaby, Scotland, and had winds of nearly 100 miles per hour, which was great for crashing waves. In Sweden, when we were doing the ice shots for the ‘Oceans’ episode of Prehistoric Planet, it was great to begin with because it was -28°C, but there weren’t any holes in the ice. We nearly flew to Finland to try to find one. Then overnight the temperature went up to 3°C, the wind picked up and all of the ice broke up and melted, and we couldn’t find any ice without a hole!” Dealing with the requirements for visual effects led to some surreal situations. “The Pterosaur cliffs sequence was one of the most complex sequences because it involved shoots on land, sea, aerial and practical effects,” Stewart recalls. “Animation Supervisor Seng Lau worked with me in the field to help direct the plate work, and it was a fun collaboration. Watching our Smurf-blue baby puppet Pterosaurs emerge from their seaweed nests was a bonding moment!”

TOP TO BOTTOM: Proxies ranging from 3D-printed heads to cut-outs allowed Paul Stewart to frame shots properly for Prehistoric Planet. (Image courtesy of Paul Stewart)

Cinematographer Paul Stewart describes “mimicking other cameras like thermal cameras” to point out that many dinosaurs were warm-blooded and insulated by feathers. (Image courtesy of Apple)

Cinematographer Jamie McPherson on location to film Komodo dragons with the cine-buggy. (Image courtesy of Jamie McPherson)

REALISTIC FACIAL ANIMATION: THE LATEST TOOLS, TECHNIQUES AND CHALLENGES

By OLIVER WEBB

BOTTOM: Vicon’s CaraPost single camera tracking system tracks points using only a single camera. Single-camera tracking works automatically when only one camera can see a point. If a point becomes visible again in two cameras, the tracking reverts to multi-camera tracking. (Image courtesy of Vicon Motion Systems Ltd. UK)

Realistic facial animation remains a cornerstone of visual effects, enabling filmmakers to create compelling characters and immersive storytelling experiences. Facial animation has come a long way since the days of animatronics, as filmmakers now have access to a choice of advanced facial motion capture systems. Technologies such as performance capture and motion tracking, generative AI and new facial animation software have played a central role in the advancement of realistic facial animation. World-leading animation studios are utilizing these tools and technologies to create more realistic content and breaking new ground in the way characters are depicted.

A wide range of facial capture technology is in use today. One of the pioneers of facial animation technology is Vicon’s motion capture system, which was vital to films such as Avatar and The Lord of the Rings trilogy. Faceware is another leading system that has been used in various films, including Dungeons & Dragons: Honor Among Thieves, Doctor Strange in the Multiverse of Madness and Godzilla vs. Kong, as well as games such as Hogwarts Legacy and EA Sports FC 24. ILM relies on several systems, including the Academy Award-winning Medusa, which has been a cornerstone of ILM’s digital character realization. ILM also pioneered the Flux system, which was created for Martin Scorsese’s The Irishman, as well as the Anyma Performance Capture system, which was developed with Disney Research Studios. DNEG, on the other hand, uses a variety of motion capture options depending on the need. Primarily, DNEG uses a FACS-based system to plan, record and control the data on the animation rigs. “However, we try to be as flexible as possible in what method we use to capture the data from the actors, as sometimes we could be using client vendors or client methods,” says Robyn Luckham, DNEG Animation Director and Global Head of Animation.

TOP: Timothée Chalamet and mini Hugh Grant in Wonka. When it came to generating a CG version of an actor as recognizable as Hugh Grant for Wonka, Framestore turned to sculptor and facial modeler Gabor Foner to better understand the quirks of muscular activation in the actor’s face, eventually developing a formula for re-creating facial performances. (Image courtesy of Warner Bros. Pictures)

Developing a collaborative working understanding of the human aspects, nuances and complexities and the technology was particularly challenging for Framestore on 2023’s Wonka. “Part of that was building an animation team that had a good knowledge of how FACS [Facial Action Coding System] works,” remarks Dale Newton, Animation Supervisor at Framestore. “While as humans we all have the same facial anatomy, everyone’s face moves in different ways, and we all have unique mannerisms. When it came to generating a CG version of an actor as recognizable as Hugh Grant, that raised the bar very high for us. At the core of the team was sculptor and facial modeler Gabor Foner, who helped us to really understand the quirks of muscular activation in Hugh’s face, [such as] what different muscle combinations worked together with what intensities to use for any particular expression. We ended up with a set of ingredients, recipes if you like, to re-create any particular facial performance.”
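Newton's "recipes" can be pictured as weighted combinations of FACS action units (AUs). The sketch below illustrates only that general idea under stated assumptions: AU6 and AU12 are real FACS codes, but the intensities, the one-dimensional shape offsets and the function names are hypothetical and do not represent Framestore's rig or data.

```python
# Real FACS action unit numbers; every weight and offset below is invented.
AU_NAMES = {6: "cheek raiser", 12: "lip corner puller"}

# Hypothetical per-AU vertex offsets at full intensity (3 vertices, 1 axis).
SHAPE_DELTAS = {
    6: [0.0, 0.2, 0.1],
    12: [0.5, 0.1, 0.0],
}

NEUTRAL = [1.0, 1.0, 1.0]  # hypothetical neutral face positions

def apply_recipe(neutral, recipe, deltas=SHAPE_DELTAS):
    """Add each AU's offset, scaled by its intensity in [0, 1], to the neutral face."""
    face = list(neutral)
    for au, intensity in recipe.items():
        if not 0.0 <= intensity <= 1.0:
            raise ValueError(f"AU{au} intensity {intensity} is outside [0, 1]")
        for i, delta in enumerate(deltas[au]):
            face[i] += intensity * delta
    return face

# An assumed actor-specific "recipe" for a slight smile.
slight_smile = {6: 0.5, 12: 0.8}
posed = apply_recipe(NEUTRAL, slight_smile)
print(posed)
```

In this simplified picture, two actors could share the same action units while differing in the intensities each expression needs, which is one way to read the point that everyone's face moves differently.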

Masquerade3 represents the next level of facial capture technology at Digital Domain. “This latest version brings a revolution to facial capture by allowing markerless facial capture without compromising on quality,” Digital Domain VFX Supervisor Jan Philip Cramer explains. “In fact, it often exceeds previous standards. Originally, Masquerade3 was developed to capture every detail of an actor’s face without the constraints of facial markers. Utilizing state-of-the-art machine learning, it captures intricate details like skin texture and wrinkle dynamics. We showcased its outstanding quality through the creation of iconic characters such as Thanos and She-Hulk for Marvel. Eliminating the need for markers is a natural and transformative progression, further enhancing our ability to deliver unmatched realism. To give an example of the impact of this update; normally, the CG actor has to arrive two hours early on set to get the markers applied. After each meal, they have to be reapplied or fixed. The use of COVID masks has made this issue infinitely worse. On She-Hulk, each day seemed to have a new marker set due to pandemic restrictions, and that caused more hold-ups on our end. So, we knew removing the markers would make a sizeable impact on production.”

TOP TO BOTTOM: ILM’s Flux system, which was created for Martin Scorsese’s The Irishman, allows filmmakers to capture facial data on set without the need for traditional head-mounted cameras on the actor. (Image courtesy of ILM)

Vicon’s Cara facial motion capture system. (Image courtesy of Vicon Motion Systems Ltd. UK)

Faceware’s Mark IV Wireless Headcam System for facial capture. (Image courtesy of Faceware Technologies Inc.)

TOP: ILM relies on the Academy Award-winning Medusa system, a cornerstone of ILM’s digital character realization, the Anyma Performance Capture system, which was also developed with Disney Research Studios, and the Flux on-set system. (Image courtesy of ILM)

BOTTOM: Wētā FX’s FACET system was developed primarily for Avatar, where it provided input to the virtual production technology. Other major facial capture projects include The Hobbit trilogy and the Planet of the Apes trilogy. (Image courtesy of Wētā FX)

Ensuring that the emotions and personalities of each character are accurately conveyed is critical when it comes to mastering realistic facial animation. “The process would loosely consist of capture, compare, review and adjust,” Luckham explains. “It would be a combination of the accuracy of the data capture, the amount of adjustments we would need to make in review of the motion capture data against the actor’s performance and then the animation of the character face rig against the actor’s performance, once that data is put onto the necessary creature/character for which it is intended. Once we have done as much as we can in motion capture, motion editing and animation, we would then go into Creature CFX – for the flesh of the face, skin folds, how the wrinkles would express emotion, how the blood flow on a face would express color in certain emotions – to again push it as close as we can to the performance that the actor gave. After that would be lighting, which is a huge part of getting the result of facial animation and should never be overlooked. If any one of these stages are not respected, it is very easy to not hit the acting notes and realism that is needed for a character.”

For Digital Domain, the most important aspect of the process is combining the actor with the CG asset. “You want to ensure all signature wrinkles match between them. Any oddity or unique feature of the actor should be translated into the CG version,” Cramer notes. “All Thanos’ wrinkles are grounded in the actor Josh Brolin. These come to life especially in his expressions, as the wrinkle lines created during a smile or frown exactly match the actor. In addition, you don’t want the actor to feel too restricted during a capture session. You want them to come across as natural as possible. Once we showed Josh that every nuance of his performance comes through, he completely changed his approach to the character. Rather than over-enunciating and overacting, he underplayed Thanos and created this fantastic, stoic character. This is only possible if the actor understands and trusts that we are capturing the essence of his performance to the pixel.”

Performance capture is a critical aspect of realistic facial animation based on human performance. “Since Avatar, it has been firmly established as the go-to setup for realistic characters,” Cramer adds. “However, there are unique cases where one would go a different route. On Morbius, for instance, most faces were fully keyframed with the help of HMCs [head-mounted cameras], as they had to perfectly match the actor’s face to allow for CG transitions. In addition, some characters might need a more animated approach to achieve a stylistic look. But all that said, animation is still needed. We get much closer to the final result with Masquerade3, but it’s important to add artistic input to the process. The animators make sure the performance reads best to a given camera and can alter the performance to avoid costly reshoots.”

For Framestore’s work on Disney’s Peter Pan & Wendy, the facial animation for Tinker Bell was generated through a mixture of facial capture elements using a head-mounted camera worn by the actress Yara Shahidi. “This, then, underwent a facial ‘solve,’ which involves training deep learning algorithms to translate her facial motion onto our facial rigs,” Newton says. “The level of motion achieved by these solves required experienced animators to tighten up and finesse the animation in order to achieve the quality level for VFX film production. In contrast, performance capture on Wonka meant that we had good visual reference for the animators working on the Oompa Loompa as voiced by Hugh Grant. Working with Hugh and [co-writer/director] Paul King, we captured not only Hugh’s performance in the ADR sessions but also preparatory captures, which allowed us to isolate face shapes and begin the asset build. We had a main ARRI Alexa camera set up that he performed towards. Additionally, we had a head-mounted 1k infrared camera that captured his face and a couple of Canon 4k cameras on either side of him that captured his body movements.”

TOP: On Disney’s Peter Pan & Wendy, Framestore’s facial animation for Tinker Bell was generated through a mixture of facial capture elements using a head-mounted camera worn by the actress Yara Shahidi, which then underwent a facial solve involving deep learning algorithms trained to translate her facial motion onto Framestore’s CG facial rig. (Image courtesy of Walt Disney+)

BOTTOM: Masquerade3 was developed by Digital Domain to capture every detail of an actor’s face without the constraints of facial markers, as showcased through the creation of iconic characters such as Thanos from the Avengers films. All Thanos’ wrinkles are grounded in the actor Josh Brolin. (Image courtesy of Marvel)

According to Oliver James, Chief Scientist at DNEG, generative AI systems, which can generate data resembling that which they were trained on, have huge potential applications in animation. “They also have the potential to create huge legal and ethical problems, so they need to be used responsibly,” James argues. “Applied to traditional animation methods, it’s possible to generate animation curves which replicate the style of an individual, but can be directed at a high level. So instead of having to animate the motion of every joint in a character over time, we could just specify an overall motion path and allow an AI system, trained on real data, to fill in the details and generate a realistic full-body animation. These same ideas can be applied to facial motion too, and a system could synthesize animation that replicated the mannerisms of an individual from just a high-level guide. Face-swapping technology allows us to bypass several steps in traditional content creation, and we can produce photorealistic renderings of new characters driven directly from video. These techniques are typically limited by the availability of good example data to train the networks on, but this is being actively tackled by current research, and we’re already starting to see convincing renders based on just a single reference image.”

Newton suggests that given how finely tuned performances in film VFX are today, it will take some time for AI systems to become useful in dealing with more than the simplest animation blocking. “A personal view on how generative AI is developing these days – some companies create software that seems to want to replace the artist. A healthier attitude, one that protects the artists and, thereby, also the business we work in at large, is to focus AI development on the boring and repetitive tasks, leaving the artist time to concentrate on facets of the work that require aesthetic and creative input. It seems to me a safer gamble that future creative industries should have artists and writers at their core, rather than machines,” Newton says.

For Digital Domain, the focus has always been to marry artistry with technology and make the impossible possible. “There is no doubt that generative AI will be here to stay and utilized everywhere; we just need to make sure to keep a balance,” Cramer adds. “I hope we keep giving artists the best possible tools to make amazing content. Gen AI should be part of those tools. However, I sure hope gen AI will not be utilized to replace creative steps but rather to improve them. If someone without an artistic background can make fancy pictures, imagine how much better an amazing artist can utilize those.”

There has been a surge in new technologies over the past few years that have drastically helped to improve realistic facial animation. “The reduced cost and complexity of capturing and processing high resolution, high frame rate and multi-view video of a performance have made it easier to capture a facial performance with incredible detail and fidelity,” James says. “Advances in machine learning have made it possible to use this type of capture to build facial rigs that are more expressive and lifelike than previous methods. Similar technology allows these rigs to perform in real-time, which improves the experience for animators; they can work more interactively with the rig and iterate more quickly over ideas. Real-time rendering from game engines allows animators to see their work in context: they can see how a shadow might affect the perception of an expression and factor that into their work more effectively. The overall trend is away from hand-tuned, hand-sculpted rigs, and towards real-time, data-driven approaches.”

TOP TO BOTTOM: Masquerade3 represents the next level of facial capture technology at Digital Domain by allowing markerless facial capture while maintaining high quality. (Image courtesy of Digital Domain)

YouTube creators of “The Good Times are Killing Me” experiment with face capture for their custom characters. Rokoko Face Capture for iOS captures quality facial expressions on the fly. It can be used on its own or alongside Smartsuit Pro and Smartgloves for full-body motion capture. (Image courtesy of Rokoko)

Rokoko Creative Director Sam Lazarus playing with Unreal’s MetaHuman Animator while using the Headrig for iPhone face capture. (Image courtesy of Rokoko)

Cramer supports the view that both machine learning and AI have had a serious impact on facial animation. “We use AI for high-end 1:1 facial animation, let’s say, for stunts. This allows for face swapping at the highest level and improves our lookdev for our 3D renders. In addition, we can control and animate the performance. On the 3D side with Masquerade3, we use machine learning to generate a 4D-like face mask per shot. Many aspects of our pipeline now utilize little training models to help streamline our workflow and make better creative decisions.”

On Peter Pan & Wendy, Framestore relied on advanced facial technology to capture Tinker Bell. “We worked with Tinker Bell actress Yara Shahidi who performed the full range of FACS units using OTOY’s ICT scanning booth. The captured data was solved onto our CG facial rig via a workflow developed using a computer vision and machine learning-based tracking and performance retargeting tool,” Newton details. “This created a version of the facial animation for Tinker Bell the animators could build into their scenes. This animation derived from the solve required tightening up and refinement from the animators, which was layered on top in a non-destructive way. This workflow suited this show, as the director wanted to keep Yara’s facial performance as it was recorded on the CG character in the film. Here, the technology was very useful in reducing the amount of the time it might have taken to animate the facials for particular shots otherwise.”

Concludes Luckham, “For facial capture specifically, I would say new technologies have generally improved realism, but mostly indirectly. It’s not the capturing of the data literally, but more how easy we can make it for the actor to give a better performance. I think markerless and camera-less data-capturing is the biggest improvement for actors and performances over technology improvements. Being able to capture them live on set rather than on a separate stage, adds to the filmmaking process and the involvement of the production. Still, at the moment, I think the more intrusive facial cameras and stage-based capturing does get the better results. Personally I would like to see facial capture become a part of the on-set production, as the acting you would get from it would be better. Better for the actor, better for the director and better for the film as a whole.”

TOP TO BOTTOM: DNEG primarily uses a FACS (Facial Action Coding System) based system to plan, record and control the data on the animation rigs, but tries to be as flexible as possible in what method they use to capture the data from the actors, as sometimes they could be using client vendors or client methods. (Images courtesy of DNEG)

Creating Ariel’s digital double for The Little Mermaid was one of the most complicated assets Framestore ever built. It involved replacing all of Halle Bailey’s body, and at times also her face, with her mermaid form. (Image courtesy of Walt Disney Studios)

On Morbius, where Masquerade3 was used for facial capture, most faces were fully keyframed with the help of HMCs, as they had to perfectly match the actor’s face to allow for CG transitions. (Image courtesy of Digital Domain and Columbia Pictures/Sony)

FORMING A STRONG BOND WITH RACHAEL PENFOLD

By OLIVER WEBB

Rachael Penfold grew up in Ladbroke Grove in West London where she attended the local comprehensive school. “At the time, it was less of an institute for education and more like a social experiment,” Penfold reveals. “A symptom of the same problems we see today because of the lack of funding for state education. A small example, but it’s easy to see why diversity is a problem in the wider film industry.”

Penfold didn’t initially study to become a visual effects artist; instead, she learned on the job. “I had the best apprenticeship really,” Penfold says. “It was at the Computer Film Company (CFC), a pioneering digital film VFX company. I think they may have been the first in the U.K. It was a sort of melting pot of scientists, engineers, filmmakers and artists. It was weird and fun and pretty unruly to be honest, but always and without compromise, it was about image quality. I obviously made a half-decent impression as a runner at CFC and was moved to production assistant. Either that or they simply needed bodies in production. So, in that sense, I caught a lucky break with my entry into the industry.”

Penfold’s lucky break came in 1997 with the British fantasy film Photographing Fairies, on which she served as Visual Effects Producer. Other CFC projects followed, with Penfold working as a Visual Effects Producer on acclaimed films such as Tomorrow Never Dies, Spice World, The Bone Collector, Mission: Impossible II, Chicken Run and Sexy Beast. Penfold’s last CFC project was the 2002 film Resident Evil, for which she was Head of Production.

In 2004, Penfold co-founded London visual effects studio One of Us alongside Dominic Parker and Tom Debenham, where she currently serves as Company Director. Working across TV and film, One of Us currently has a capacity for over 300 artists, but is looking to expand across more exciting projects. One of Us also launched its Paris studio in 2021, which houses 70 artists. The company won Special, Visual & Graphics Effects at the 2022 BAFTA TV Awards for their work on Season 2, Episode 1 of The Witcher, and in 2022 they were also nominated for Outstanding Visual Effects in a Photoreal Feature at the VES Awards for their work on The Matrix Resurrections. Some of their recent work includes Damsel, The Sandman, Fantastic Beasts: The Secrets of Dumbledore and Bridgerton, Season 2.

Images courtesy of Rachael Penfold, except where noted.

TOP: Rachael Penfold, Co-Founder and Company Director, One of Us

OPPOSITE TOP: Some of the work that One of Us completed for Damsel included digi-doubles, burnt swallows, armor swords, dragon fire, melting and cracking ice and huge-scale cave environments and DMP set extensions. (Image courtesy of Netflix)

OPPOSITE MIDDLE, LEFT TO RIGHT: The One of Us leadership team. From left: Tom Debenham, Rachael Penfold and Dominic Parker. One of Us won the BAFTA Craft Award in 2018 for their work on The Crown.

Penfold at the 2022 Emmy Awards where One of Us was nominated for Special Visual Effects in a Single Episode for Episode 1 of The Man Who Fell to Earth as well as for Special Visual Effects in a Season or a Movie for The Witcher, Season 2.

OPPOSITE BOTTOM: The Zone of Interest won Best International Feature Film at the 96th Academy Awards and was also nominated for Best Picture. (Image courtesy of A24)

Setting up the company was a very organic process for Penfold. “We set up a small team to deal with the visual development of a particular project,” Penfold says. “We kept ourselves small for quite a few years, always wanting to engage with more of the outsider work. It was great fun, lots of risk, and my two partners, Dominic Parker and Tom Debenham, are also two wonderful friends. We still work so closely together.”

Penfold’s first film as One of Us Visual Effects Producer was the 2007 film When Did You Last See Your Father? starring Jim Broadbent and Colin Firth. Since launching One of Us, Penfold has worked on an array of projects including The Tree of Life, Cloud Atlas, Under the Skin, Paddington, The Revenant and The Alienist. One of Us also served as the leading vendor on the Netflix original series The Crown. Their work for the show included digital set extensions, environments, crowd replication and recreating Buckingham Palace. They have also contributed to key scenes throughout the series, including Queen Elizabeth and Prince Philip’s Royal Wedding of 1947, the funeral of King George VI and subsequent coronation of Queen Elizabeth II. Penfold served as Visual Effects Executive Producer across 20 episodes. The company’s work on the show brought wider recognition: it won the BAFTA Craft Award in 2018 and received Emmy nominations for Outstanding Special Visual Effects in a Supporting Role in 2017 for Season 1 and again in 2018 for Season 2. The company ethos of One of Us is one of creative intelligence and the ability to select and adapt ways of approaching practical problems involved in bringing ideas to life. Since their launch in 2004, their work has truly reflected these values.

Choosing a favorite visual effects shot from her oeuvre, however, is an almost impossible task for Penfold. Given her impressive catalog of award-winning films and series, it’s easy to understand why.

“I definitely have favorite work, but not always because it’s the biggest or most ambitious. There’s so much that makes an experience great, not just the outcome, but the journey and who you go on that journey with,” Penfold explains. “But, from a professional pride perspective, Damsel is a huge achievement.”

MIDDLE: One of Us enjoyed its creative involvement with Mirror Mirror (2012). (Image courtesy of Relativity Media)

BOTTOM LEFT: Penfold and Jonathan Glazer at the premiere of Under the Skin at the 70th Venice International Film Festival in Venice in 2013. (Photo: Aurore Marechal)

BOTTOM RIGHT: Penfold taught a masterclass in VFX for “Becoming Maestre – A springboard for a new generation of professionals in cinema and seriality” in Rome in 2023. The mentoring program, aimed at Italian female audiovisual talent, was conceived and developed by Accademia del Cinema Italiano, David di Donatello Awards and Netflix as part of the Netflix Fund for inclusive creativity. (Image courtesy of Accademia del Cinema Italiano)

Some of the impressive work that One of Us completed for the film included digi-doubles, burnt swallows, armor swords, dragon fire, melting and cracking ice, huge-scale cave environments and DMP set extensions. “Again, I can’t choose a favorite shot from the film,” Penfold explains. “There are so many massive shots in that film, but the shot where the dragon flattens the knight underfoot feels like the culmination of many years of hard work, growing One of Us to the point where it can take on the toughest challenges. From a simple aesthetic perspective, I love the work we did on Mirror Mirror – a perfectly told story in a beautifully designed world.”

Another recent project that Penfold is particularly proud of is The Zone of Interest, directed by her partner Jonathan Glazer. The film marks their third feature collaboration after Sexy Beast (2000) and Under the Skin (2013). The Zone of Interest follows Rudolf Höss, the commandant of Auschwitz, and his wife, Hedwig, as they strive to build a dream life for their family beside the extermination camp that Höss helped to create. “Sometimes you are proud to be associated with a project because it’s a great piece of filmmaking, even if our work is a relatively minor contribution,” Penfold remarks. “I’m very proud to have been a part of The Zone of Interest. I believe it is an important film. There are also 660 visual effects shots in it, and I’m delighted that no one knows that!”

On the experience of setting up a company in a male-dominated industry, Penfold explains that the important thing is to look after the work and look after the people and the rest should follow. “Parity/equality is best served by looking after your people – and by looking after all people so you create an environment where everyone can thrive. Key roles for women, and a diverse team, will come through a genuine commitment to value everyone which, in turn, will enrich everything that we do,” Penfold states.

VFX tools and technology never stop developing, and there have been many advancements since the beginning of Penfold’s career. “Thinking back to the rudimentary tools we used to have – massive clunking hardware that seemed to constantly fall over or fail. The most basic software… we have come a really long way,” she notes. “Many of today’s tools are designed to improve long-established techniques. The craft is being reinvigorated by new technologies in better and more exciting ways. Some technologies are completely new, and we are learning how to use them. But, as consumers, we have an irrepressible desire to examine our own humanity, one way or another. I can’t see a world where human storytellers are not at the heart of creating and bringing to life our own stories.”

For Penfold, that humanity is key to her definition of success. “Without question, the thing that I enjoy most about my role is the absolutely wonderful people I work with. Whether that’s internally – our teams, or whether that’s part of the ‘film family’ – these endeavors are often hard and long and unknown. So, you really do form extremely strong bonds. Producing exciting imagery is thrilling; it’s a real buzz. So, to do that as part of a tight-knit team is rewarding in so many ways.”

TOP: Penfold’s first film as the Visual Effects Producer for One of Us was the 2007 film When Did You Last See Your Father? starring Jim Broadbent and Colin Firth. (Image courtesy of Sony Pictures)

PROGRAMMING THE ILLUSION OF LIFE INTO THE WILD ROBOT

By TREVOR HOGG

What happens when a precisely programmed robot has to survive the randomness of nature? That is the premise that allowed author Peter Brown to bring a fresh perspective to the ‘fish-out-of-water’ scenario that captured the attention of filmmaker Chris Sanders and DreamWorks Animation. Given the technological innovations that were achieved with The Bad Guys and Puss in Boots: The Last Wish, the timing proved to be right to adapt The Wild Robot in a manner that made distinguishing the concept art from the final frame remarkably difficult.

“When I read the book for the first time, I was struck by how deep the emotional wavelengths were, and I became concerned that if the look of the film wasn’t sophisticated enough, people would see it as too young because of the predominance of animals and the forest setting,” explains director/writer Chris Sanders. “We’ve always talked about Hayao Miyazaki’s forests, which are beautiful, sophisticated, immersive and have depth. We wanted that same feeling for our film.”

Achieving that desired visual sophistication meant avoiding the coldness associated with CG animation. “I’m absolutely thrilled about the analog feel that we were able to revive,” Sanders notes. “It’s one of the things that I’ve missed the most about traditional animation. The proximity of the humans who created it makes the look resonate. When you have hand-painted backgrounds, the artist’s hand is evident; there’s a warmth and presence that you get. When we moved into full CG films, we immediately got these wonderful gifts, like being able to move the camera in space and change lenses. However, we also lost that analog warmth and things got cold for a while.” The painterly approach made for an interesting discovery. “A traditionally done CG tree is a structure that has millions of leaves stuck to it, and we’re fighting to make those leaves not look repetitive. Now we’re able to have someone paint a tree digitally. They can relax the look and make it more impressionistic. The weird and interesting thing is, it looks more realistic to my eye,” Sanders remarks.

Images courtesy of DreamWorks Animation and Universal Pictures.

TOP: Central to the narrative is the parental relationship between Roz and Brightbill. A shift to the island perspective occurs when the otters discover Roz.

CG animation was not entirely avoided as a visual aesthetic, in particular when illustrating the character arc of the island-stranded ROZZUM Unit 7134 robot, which goes by the nickname ‘Roz.’ “From the first frame of the movie to the last frame, Roz is dirtier and growing things on her,” notes Visual Effects Supervisor Jeff Budsberg. “But if you look at the aesthetic of how we render and composite her, there is a drastic difference. Roz is much looser. You’ll see brushstroke highlights and shadow detail removed just like a painter would. We slowly introduce those things over the course of the movie. When the robots are trying to get her back, it becomes a jarring juxtaposition; she now fits with the world around her while the robots have that more CG look to them.”

Something new to DreamWorks is authoring color in the DCI-P3 color space rather than sRGB. Budsberg explains, “It allows us a wider gamut of available hues because we wanted to feel more like the pigments that you have available as a painter. It allows us to hit way more saturated things than we’ve been able to do at DreamWorks before. The same with the greens. They’re so much richer in hue. Maybe to the audience, it’s imperceptible, but the visceral experience is so much more impactful.”
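For readers curious about what a “wider gamut” means in numbers, the comparison can be sketched with the published CIE 1931 xy chromaticities of the red, green and blue primaries for sRGB and DCI-P3. The Python below is an illustrative sketch only, not anything from the DreamWorks pipeline; the function names are hypothetical, and triangle area in xy space is a rough, non-perceptual proxy for gamut size.

```python
# Published CIE 1931 xy chromaticities of the red, green and blue primaries.
# sRGB shares its primaries with Rec. 709; DCI-P3 uses a deeper red and green.
SRGB_PRIMARIES = [(0.640, 0.330), (0.300, 0.600), (0.150, 0.060)]
P3_PRIMARIES = [(0.680, 0.320), (0.265, 0.690), (0.150, 0.060)]


def triangle_area(tri):
    """Area of a gamut triangle in the xy diagram (shoelace formula)."""
    (x1, y1), (x2, y2), (x3, y3) = tri
    return abs(x1 * (y2 - y3) + x2 * (y3 - y1) + x3 * (y1 - y2)) / 2.0


def _cross(o, a, b):
    """Z component of the cross product of vectors o->a and o->b."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def inside(point, tri):
    """True if the chromaticity lies inside or on the edge of the triangle."""
    a, b, c = tri
    signs = (_cross(a, b, point), _cross(b, c, point), _cross(c, a, point))
    has_negative = any(s < 0 for s in signs)
    has_positive = any(s > 0 for s in signs)
    # A point is outside only when it falls on different sides of two edges.
    return not (has_negative and has_positive)


def gamut_ratio():
    """How much larger the P3 triangle is than the sRGB triangle in xy."""
    return triangle_area(P3_PRIMARIES) / triangle_area(SRGB_PRIMARIES)


if __name__ == "__main__":
    print(f"P3 / sRGB area ratio in xy: {gamut_ratio():.3f}")
    print("P3 green reachable in sRGB:", inside(P3_PRIMARIES[1], SRGB_PRIMARIES))
    print("sRGB green reachable in P3:", inside(SRGB_PRIMARIES[1], P3_PRIMARIES))
```

Under these assumptions, the P3 triangle comes out roughly a third larger than the sRGB triangle, and the P3 green primary falls outside what sRGB can represent, which is consistent with Budsberg’s remark about richer greens.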

Elevating the themes of The Wild Robot is the shape language. “We’ve always loved the potential that Roz was going to be a certain fish out of water, and that influenced the design,” notes Production Designer Raymond Zibach. “The simple circular clean, the way that we know a lot of our technology from the iPhone to the Roomba, everything is simple shapes, whereas nature is jagged or pretty with flowers, but everything is asymmetrical.” The trio of Nico Marlet, Borja Montoro and Genevieve Tsai were responsible for the character designs. “All three of them were studying animals and doing the almost classic Disney approach, like when they studied deer for Bambi. Our style landed somewhere in-between a realistic drawing and a slightly pushed style. You can see that in the character, Fink. How big his tail is to how pointy his face is. Those things are born out of an observation that Nico made on all of his fox drawings. We have quite a few species. I heard somebody say 60, but it’s because we have quite a few birds, so maybe that’s why it ended up being that many,” Zibach says.

While the watercolor paintings Tyrus Wong did for Bambi influenced the depiction of the island, a famous industrial designer was the inspiration for the technologically advanced world of the humans and robots. “Syd Mead is the father of future design from the 1960s through the 1980s,” Zibach observes. “The stuff before Blade Runner was optimistic. We wanted to bring that sense to our human version of the future, which to the humans is optimistic. However, for the animals, it’s not a great place for them. That ended up being such a great fit.” As for the robots, Ritchie Sacilioc [Art Director] did the final designs for Roz, RICOs and Vontra.

“Ritchie designed most of the future world except for a neighborhood that I did. Ritchie loves futurism, and you can see it reflected in all of those designs because he also helped matte painting do all of the cityscapes. I couldn’t be happier because I always wanted to work on a sci-fi movie in animation, and this is my first one. We got to blow the doors off to do cool stuff.”

Eliminating facial articulation was an important part in making Roz a believable and endearing robot.

TOP TO BOTTOM: The migration scene pushed the crowd simulations to the limit.

Storyboards depicting the interaction of Roz with Brightbill.

“It’s limiting not to have a mouth, but that’s red meat for us as animators because that’s when we start to imagine and emphasize pantomime. We looked at Buster Keaton, who has very little facial expression, but his body language conveys all of the emotions, as well as other masters of pantomime and comedy, Charlie Chaplin and Jacques Tati. There is definitely comedy in this film, but all of it is grounded in some form of reality.”

—Jakob Jensen, Head of Character Animation

TOP: Photorealism was not the aim of the visuals, but rather to create the warmth associated with handcrafted artwork.

BOTTOM: As the story progresses, the visual aesthetic of Roz becomes less slick and more like the rugged surrounding environment.

Tools were made to accommodate the need for wind to blow through the vegetation. “In Doodle, where you can draw whatever foliage assets that you want, we have Grasshopper, which can build rigs to deform these plants on demand, and you can build more physical rigs of geometry that don’t connect or flowers that are floating,” Budsberg explains. “We can build different rigs at various wind speeds, or you can do hero-generated geometry.” Water was tricky because it could not look like a fluid simulation. “It’s one thing to make the water, but how do you make the water look like a painter painted it? It’s not good enough to make physically accurate water. You have to take a step back to be able to dissect it: How would Hayao Miyazaki paint this or draw that splash? How would a Hudson River painter detail out this river? You have to forget what you know about computer-generated water and rethink how you would approach some of those problems. You want to make sure that you feel the brushstrokes in that river. Look at the waterfall shot where the water starts to hit the sunlight; you feel that the brushstrokes of those ripples are whipping through the river. There’s a little Miyazaki-style churn of the water that is drawn. Then the splashes are almost like splatter paint. It’s an impressionistic version of water that allows the audience to make it their own. You don’t want to see every single micro ripple or detail,” Budsberg remarks.

A conscious decision was made not to give Roz any facial articulation to avoid her appearing ‘cartoony.’ “It’s limiting not to have a mouth, but that’s red meat for us as animators because that’s when we start to imagine and emphasize pantomime,” states Jakob Jensen, Head of Character Animation. “We looked at Buster Keaton, who has very little facial expression, but his body language conveys all of the emotions, as well as other masters of pantomime and comedy, Charlie Chaplin and Jacques Tati. There is comedy in this film, but all of it is grounded in some form of reality.”

It was also important to incorporate nuances that revealed a lot about the characters and added to their believability. “One of our Chief Supervising Animators, Fabio Lignini, was the supervisor of Roz chiefly, but also was with me from the beginning in developing the animal stuff. He did so much wonderful work that was so inventive and grounded in observations of how otters behave. We would show the story department, ‘This is what we’re thinking.’ That would then make them pivot from doing a lot of anthropomorphic hand-acting of certain creatures that was not the direction Chris Sanders or our animation department felt that it should go in. By seeing what we were doing, they adapted their storyboards, because you have to stage your shot. How do otters swim? There is so much fun stuff to draw from nature, so why not use that?”

Locomotion had to be understood to the degree that it became second nature to the animator. “The animal starts walking and trots over here, or maybe gallops and then stops,” Jensen explains. “For that to become second nature for an animator, you have to study hard, and you’ll see shots where it’s amazing how the team did it because you never pay attention to it. You just believe it. That was my first pitch to Chris Sanders as to how far I saw the animation approach and style. I wanted the animation to disappear into the story and for no one to concentrate on the fact that we are watching animation. We’re just watching the story and characters.” Crowds were problematic. Jensen comments, “Sometimes, we have an immense number of characters who couldn’t only be handled by the crowds department. We threw stuff to each other all of the time. But in order to even have a session in our Premo software that would allow for more than five to 10 characters in the shot, they had to come up with all kinds of solutions to have a low-resolution version of the character that could be viewed while animating all of the other characters because there are a ton of moments when they all interact with each other.”

TOP TO BOTTOM: Roz teaches Brightbill how to fly.

Skies play a significant role in establishing the desired tone for scenes.

The forest environment was inspired by animation legend Hayao Miyazaki.

The lodge Roz helped to construct serves as a refuge for wildlife during a nasty winter.

TOP: Effects are added to depict Roz fritzing out in the forest.

BOTTOM: An animation storyboard that explores the motion of Roz.

Animation tends to have quick cuts, which were not appropriate for The Wild Robot. “From the start, it became evident that we needed time [to determine] how to express that in a way when you don’t have a final image to say, ‘See this is gorgeous and you’re going to want to be here,’” notes Editor Mary Blee. “We were using tricks and tools like taking development art and using After Effects to put characters in it moving, or getting things on layers to say, ‘She is walking through the forest. You guys are going to be interested one day, but it’s hard to see right now.’ There was a lot of invention to get across the flavor, tone and pace of the movie. We had a couple of previs shots, one in particular where Roz stands on top of the mountain and sees the entirety of the island. Chris Stover [Head of Cinematography Previz/Layout], with help from art, made that up out of nothing before we had a sequence.” Boris FX was utilized to create temporary effects. “The first sequence I cut was Roz being chased by a bear, falling down a mountain and discovering that she has destroyed a goose nest and there is only an egg left. We needed to show that her computer systems were failing, so we made a fake HUD and started adding effects to depict it fritzing out and an alarm going off. That sequence was amazing because it encapsulated what the movie was going to be, which was a combination of action, stress, excitement, devastation, sadness and silence. All that happens within two and a half minutes,” Blee says.

Illustrating scenes involving crowds was a major task for the previs team. “We have a lot of naturalistic crowds whether it was all of the animals in the lodge, the migration scenes, flying flocks of birds and the forest fire with all of the animals running,” Stover notes. “For the geese, it was fairly simple. It was like a sea of birds. When you are looking at moments like the lodge, it was impactful for the audience to understand that there were a lot of animals that were going to be affected if Roz didn’t step in and help the island over this harsh winter.” Each of the three ‘oners’ was complex to execute. Stover explains, “Those types of shots were often tricky because I don’t want anybody to ever look at it and go, ‘That’s a single shot. It’s really cool.’ What we want to do is allow you to be with that character for an extended period of time in a way that would ground you to that character’s challenges, as well as the cinematic moment that we’re trying to achieve in the storytelling.” Throughout the story, various camera styles were adopted. “At the beginning, we’re on a jib arm and it feels controlled. It wasn’t until we created the shot where the otters jump into the water and pop up that we then realized we are now in the island’s point of view. The wildness of the island became a loose camera style. The acting was going to drive the camerawork. When Vontra tries to lure Roz onto the ship, we use this flowy camera style. We let the action move in a much more dynamic way. The camera feels deliberate in its choices. That sensibility of being deliberate versus the sensibility of reactionary camerawork was the contrast that we had to play with throughout the film.”

To create the illusion of spontaneity, close attention was paid to the background characters. “At one point, a pair of dragonflies are behind Roz,” Sanders states. “I never sat down and said, ‘I must have dragonflies.’ This was something that was built in as people were working on it. It was perfectly placed and was so well thought through because it looked believable. Things fly in; they’re asymmetrical, off to the side and dart out. It feels like the dragonflies flew through a shot that we were shooting with a camera on that day.”

Jensen is partial to the character of Fink. “That was one of the first characters that we developed that was supervised by Dan Wagner [Animation Supervisor], who is a legend. I liked animating him because it’s difficult to do a fox. They don’t quite move like dogs or cats. It’s not even an in-between. It’s something interesting.” Even moments of silence were carefully considered, such as during the migration scene where Brightbill is at odds with his surrogate mother Roz before he flies away with a flock of geese, which was aided by a shifted line of dialogue that reinforces the idea that things are not good between them.

“If we have done our job, there is storytelling in the silence,” Blee observes. “It’s not just a pause or beat. It’s the weight of what we’ve all had in our lives when we needed to say something to somebody, but we can’t. It’s awkward and difficult, and there are too many emotions. Hundreds of storyboards were drawn to try to get that moment right over time. Because we don’t want it to sit there and use exposition to have people just talk to explain, ‘You’re supposed to be feeling this right now.’ No. It’s a lot of work to make it so you don’t have to say anything but the audience understands what’s happening.”

TOP TO BOTTOM: Experimenting with different shape languages for the character design of Roz.

Concept art for the pivotal sequence where Roz aids Brightbill in joining a flock of migrating geese.

Concept art of Roz and Fink attempting to survive a brutal snowstorm, which served as a visual template for the painterly animation style.

Roz appears to be oblivious to a looming wave as her attention is focused on the coastline activities of crabs.

Depicting the size and scale of Roz in comparison to the beach formations.

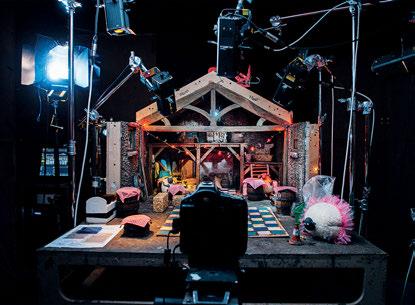

UNSUNG HEROES: SFX ARTISTS KEEP IT REAL IN A DIGITAL WORLD

By OLIVER WEBB

The role of the special effects artist is to create an illusion, practically or digitally, to enhance the storytelling. Practical effects include the use of makeup, prosthetics, animatronics, pyrotechnics and more, while digital effects rely on computer-generated imagery. With the two mediums having blended together in more recent years, the role of the special effects artist is often overlooked. Following are just a few of the many artists working today who are responsible for providing audiences with outstanding effects and immersing us in the world of film. From concept designers to special effects supervisors, they are working tirelessly behind the scenes to make movie magic happen.

Neil Corbould, VES, Special Effects Supervisor

TOP: Dominic Tuohy was nominated for an Academy Award for his visual effects work on The Batman (2022). (Image courtesy of Dominic Tuohy)

OPPOSITE TOP: Neil Corbould supervised this train-over-the-cliff effect for Mission: Impossible – Dead Reckoning Part One (2023). (Image courtesy of Neil Corbould and Paramount Pictures)

OPPOSITE BOTTOM: Neil Corbould feels that practical effects are “in a great place,” and he embraces all the new technologies that come along that add to his toolkit of options. (Image courtesy of Neil Corbould)

My uncle, Colin Chilvers, was the Special Effects Supervisor on Superman back in 1978. Being a big fan of Superman, I bugged Colin into taking me to see some of the sets. The first set he showed me was the ‘fortress of solitude’ built on the 007 stage at Pinewood Studios. I arrived just at the right time to see Superman aka Christopher Reeve flying down the length of the stage on a wire through the smoke, mist and dry ice. From that moment on, I knew that I wanted a job in special effects. After Superman, I went on to work on Saturn 3, which starred Kirk Douglas and Farrah Fawcett Majors, then Superman II. After that, I started working with a company called Effects Associates Ltd., run by Martin Gutteridge. This was an amazing place to learn the art of practical effects both in small and large-scale productions.

I feel that practical effects are now in a great place. I embrace all the new technologies that come along, and I am always on the lookout for the next generation of machines and materials I can use and integrate into my special effects work. YouTube has been a great source of information for me. Whenever I have any free time, I search through many clips on the platform, and it’s amazing what people come up with. Then I try to figure out how I can use it. With the influx of streaming platforms and the need for product, I have seen a surge in the need for more practical effects personnel. This has meant an increase in crew coming through the ranks at a fast pace, which has been a concern of mine here in the U.K. To be graded as a special effects supervisor takes a minimum of 15 years. During these 15 years, you need to have completed a certain number of movies in the various grades: trainee, assistant technician, technician, senior technician and then on to supervisor. This is the same with regard to pyrotechnic effects, which have similar criteria, with added independent explosion and handling courses that need to be completed before you are allowed to handle and use pyrotechnics and explosives. There is a worry that some have been fast-tracked through the system, which is where my concern lies. Because of the nature of the work we do, safety is paramount. You need to have completed the time and have the experience to say ‘This is not right.’ This comes with time spent working with seasoned supervisors who have been in the industry a long time. We need to create a safe working environment for everyone who is in and around a set.

Dominic Tuohy, Special Effects Artist

If you look at when I first started, everything we did was in-camera, i.e. filmed for real. It was harder back then – nearly 40 years ago – than it is today because we had almost no CGI and relied on matte painting and miniature work, etc. That’s where the saying, ‘It’s all smoke and mirrors’ comes from.

Now the goal is to create a seamless collaboration between special effects and visual effects so that you don’t question the effects within the film. Visual effects always have this problem: If you say to someone “Draw me an explosion,” your image will be different from mine. Whereas, if I create a real explosion, you, the audience, will accept it, and it grounds the film in reality. That’s the starting block of the ‘smoke and mirrors’ moment. Now, using the existing SFX explosion, visual effects can augment that image to fit the shot and continue to convince the audience it’s real. All of this cannot be achieved without a great team effort, and that’s something the British Film Industry has in abundance. [Note: Tuohy won the Academy Award in 2020 for Best Achievement in Visual Effects for his work on 1917.]

Nick Rideout, Co-Founder and Managing Director, Elements Special Effects

I am one of the few of my generation who wanted to be in SFX from an extremely early age. I was completely taken by Star Wars and Hammer [Film Productions] movies and wanted to be involved in making monsters and models for the film industry. I was lucky enough to go to art school and had the good fortune of a very honest tutor who pointed out that at best I was an under-average sculptor, but shouldn’t let that stop me from pursuing a career in effects work as it is such a varied department. With that, it was a matter of writing a lot of letters and hanging in there once given a chance within a physical effects department. Weather effects, mechanical rigs, fire and pyrotechnics, it felt like the greatest job ever – still does most days. I worked hard in the teams that I was part of and was fortunate enough to be alongside some of the best technicians of the time, who took the time to explain the how and why along with giving me the chance to express my ideas. It’s a long road as no two days are ever the same, and even now I’m not sure when you become an expert in the field as it is a constant learning curve.

With all HETV [high-end television], the challenges start with script expectation, director’s vision versus the schedule, budget and location. The challenges can be so varied: the physicality of getting equipment onto a location, Grade I listed buildings, not having the means to test prior to the shooting day, all this before cast and cameras are present.

There are so many times that I am proud of my crew and their accomplishments. Without romanticizing our department, the pressure to deliver on the day is huge with nowhere to hide when the cameras are turning. Concentration, expertise and, at times, sheer grit get these effects over the line. Film and television production is always evolving and reinventing. SFX is at times an old technology but remains able to integrate itself with the most modern of techniques. That’s not to say we have not developed alongside the rest of the industry, but we will always be visual and physical.

Max Chow, Concept Designer, Wētā Workshop

I didn’t know much about SFX. I wanted to try different stuff, and it just so happened that Wētā Workshop had opportunities. Because I’m quite new to this, learning about the harmony between physical manufacturing and the digital pipeline is very important. It is exciting to play a role or part in any of these projects at Wētā Workshop. I think these limits make it relatable to us and give a realistic feel, which I think we all appreciate. Being fairly new to special effects, one of the most challenging things was storyboarding for Kingdom of the Planet of the Apes. Seeing how great those storyboards were, I had to try my best to match that standard while learning how important pre-visualization and boarding are for VFX.

It’s easy to get lost and want to reinvent things and put in alternate creative designs – putting a lot of yourself in it, but we have to pull back. We have to trust in our textiles and leather workers, because they know every stitch, every seam, better than us. We have to design with the realities of SFX in mind. Later, when something is made wet, when there is cloth physics applied to a design, or when rigging is applied in VFX, there’s no doubt that it works, because it worked in real life. As VFX progresses, SFX is used in tandem to progress at the same rate – we are using new materials, workflows, tech and pipelines for physical manufacture. The world has started to demand more of the unseen in theaters, like Avatar, Kingdom of the Planet of the Apes and Godzilla vs. Kong, where we’re getting fully made-up worlds and less human ones. We pay to see something that we can’t experience in real life. As that evolves, as we demand greater entertainment, there’s more work needed to ground these films and make everything a spectacle but also believable.

TOP TO BOTTOM: John Richardson is proud of his work on all the Harry Potter films (2001-2011). (Image courtesy of Warner Bros. Pictures)

Dominic Tuohy won the Academy Award in 2020 for Best Achievement in Visual Effects for his work on 1917. (Image courtesy of Universal Pictures)

John Richardson worked on the Bond film Licence To Kill (1989). (Image courtesy of John Richardson, Danjaq LLC and MGM/UA)

Iona Maria Brinch, Senior Concept Artist, Wētā Workshop

Being a fan of The Lord of the Rings books and films, and fascinated by Wētā Workshop’s work on the films, it was a thrill when I was introduced to Wētā Workshop Co-founder Richard Taylor, VES, through a friend while I was backpacking through New Zealand. He saw my paintings, pencil sketches and wood carvings that I had been creating on the road, and invited me in. I began as an intern, working through different departments before eventually landing a role in the 3D department. From there, I worked my way into our design studio, initially doing costume designs for Avatar: The Way of Water, working closely with Costume Designer Deborah Scott. I tend to lean towards the more organic and elegant side of things when designing, such as the Na’vi costumes, which have a natural feel to them. So, working on the designs with Deborah Scott for the RDA [Resources Development Administration], who are a military unit in Avatar, was a fun challenge, from having to consider hard surfaces, to thinking about futuristic yet realistic-looking gear. I was part of the team that designed a lot of the female characters’ clothing in Avatar, like Tsireya, Neytiri and Kiri, with Deborah Scott. Getting to properly dive into the world of Pandora, then eventually see my designs go through our costume department, where they’d build them physically, was incredible. They brought it to a completely new level. You can’t take ownership of a design – it’s a collaboration that goes through so many hands, which is an absolute joy to see and be a part of.